
Four months ago, we introduced Anthropic’s Claude 3.5 in Amazon Bedrock, raising the industry bar for AI model intelligence while maintaining the speed and cost of Claude 3 Sonnet.

Today, I am excited to announce three new capabilities for the Claude 3.5 model family in Amazon Bedrock:

Upgraded Claude 3.5 Sonnet – You now have access to an upgraded Claude 3.5 Sonnet model that builds upon its predecessor’s strengths, offering even more intelligence at the same cost. Claude 3.5 Sonnet continues to improve its capability to solve real-world software engineering tasks and follow complex, agentic workflows. The upgraded Claude 3.5 Sonnet helps across the entire software development lifecycle, from initial design to bug fixes, maintenance, and optimizations. With these capabilities, the upgraded Claude 3.5 Sonnet model can help build more advanced chatbots with a warm, human-like tone. Other use cases in which the upgraded model excels include knowledge Q&A platforms, data extraction from visuals like charts and diagrams, and automation of repetitive tasks and operations.

Computer use – Claude 3.5 Sonnet now offers computer use capabilities in Amazon Bedrock in public beta, allowing Claude to perceive and interact with computer interfaces. Developers can direct Claude to use computers the way people do: by looking at a screen, moving a cursor, clicking buttons, and typing text. This works by giving the model access to integrated tools that can return computer actions, like keystrokes and mouse clicks, editing text files, and running shell commands. Software developers can integrate computer use in their solutions by building an action-execution layer and granting screen access to Claude 3.5 Sonnet. In this way, software developers can build applications with the ability to perform computer actions, follow multiple steps, and check their results. Computer use opens new possibilities for AI-powered applications. For example, it can help automate software testing and back office tasks and implement more advanced software assistants that can interact with applications. Because this technology is at an early stage, developers are encouraged to explore lower-risk tasks and use it in a sandbox environment.

Claude 3.5 Haiku – The new Claude 3.5 Haiku is coming soon and combines rapid response times with improved reasoning capabilities, making it ideal for tasks that require both speed and intelligence. Claude 3.5 Haiku improves on its predecessor and matches the performance of Claude 3 Opus (previously Claude’s largest model) at the speed and cost of Claude 3 Haiku. Claude 3.5 Haiku can help with use cases such as fast and accurate code suggestions, highly interactive chatbots that need rapid response times for customer service, e-commerce solutions, and educational platforms. For customers dealing with large volumes of unstructured data in finance, healthcare, research, and more, Claude 3.5 Haiku can help efficiently process and categorize information.

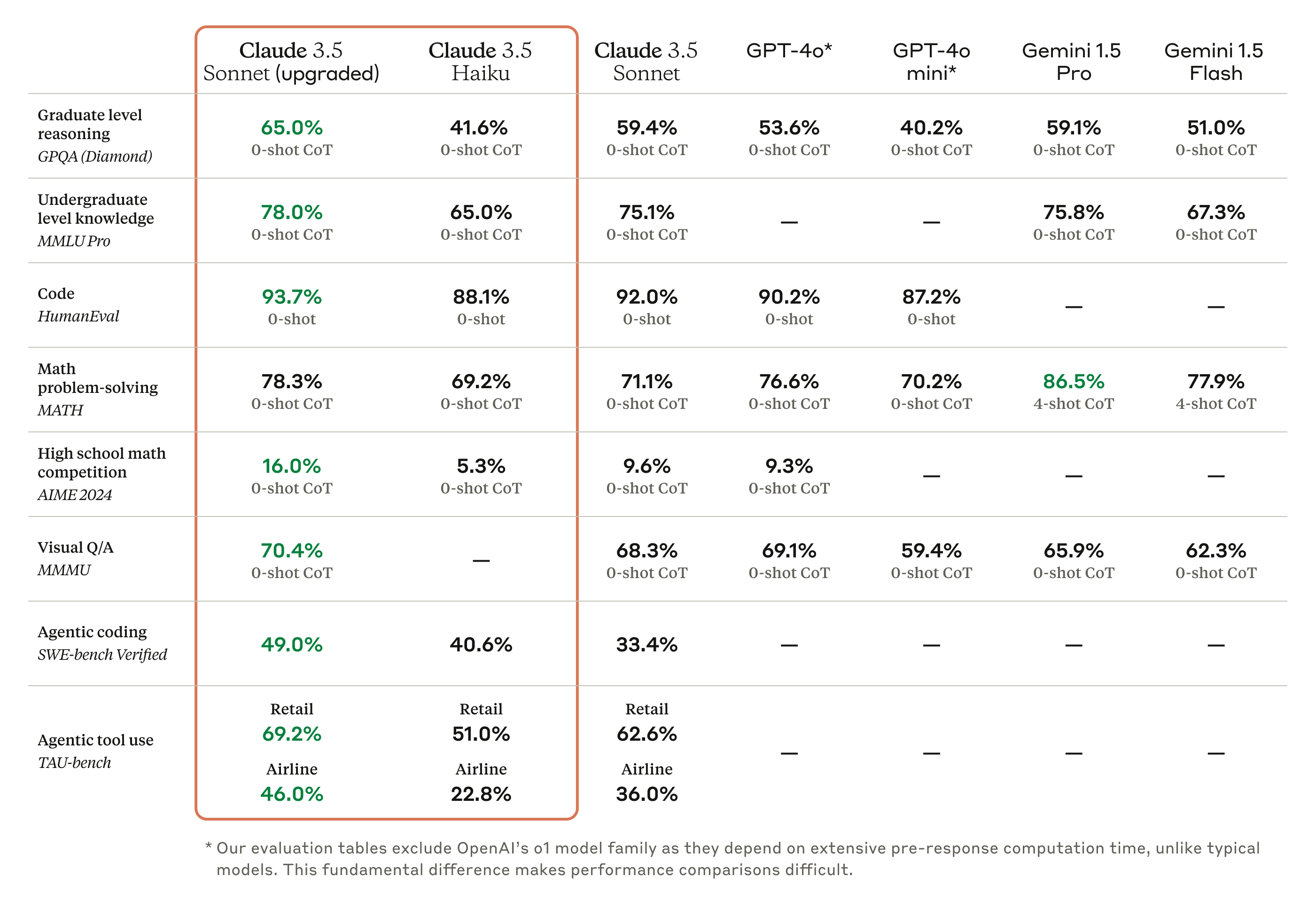

According to Anthropic, the upgraded Claude 3.5 Sonnet delivers across-the-board improvements over its predecessor, with significant gains in coding, an area where it already excelled. The upgraded Claude 3.5 Sonnet shows wide-ranging improvements on industry benchmarks. On coding, it improves performance on SWE-bench Verified from 33% to 49%, scoring higher than all publicly available models. It also improves performance on TAU-bench, an agentic tool use task, from 62.6% to 69.2% in the retail domain, and from 36.0% to 46.0% in the airline domain. The following table includes the model evaluations provided by Anthropic.

Computer use, a new frontier in AI interaction

Instead of restricting the model to use APIs, Claude has been trained on general computer skills, allowing it to use a wide range of standard tools and software programs. In this way, applications can use Claude to perceive and interact with computer interfaces. Software developers can integrate this API to enable Claude to translate prompts (for example, “find me a hotel in Rome”) into specific computer commands (open a browser, navigate this website, and so on).

More specifically, when invoking the model, software developers now have access to three new integrated tools that provide a virtual set of hands to operate a computer:

- Computer tool – This tool receives a screenshot and a goal as input and returns a description of the mouse and keyboard actions that should be performed to achieve that goal. For example, this tool can ask to move the cursor to a specific position, click, type, and take screenshots.

- Text editor tool – Using this tool, the model can ask to perform operations like viewing file contents, creating new files, replacing text, and undoing edits.

- Bash tool – This tool returns commands that can be run on a computer system to interact at a lower level, like a user typing in a terminal.

These tools open up a world of possibilities for automating complex tasks, from data analysis and software testing to content creation and system administration. Imagine an application powered by Claude 3.5 Sonnet interacting with the computer just as a human would: navigating through multiple desktop tools, including terminals, text editors, and internet browsers, filling out forms, and even debugging code.

We’re excited to help software developers explore these new capabilities with Amazon Bedrock. Claude’s current ability to use computers has limits, although we expect this capability to improve rapidly in the coming months. Some actions, such as scrolling, dragging, or zooming, can present challenges for Claude, so we encourage you to start by exploring low-risk tasks.

On OSWorld, a benchmark for multimodal agents in real computer environments, the upgraded Claude 3.5 Sonnet currently scores 14.9%. Human-level skill is far ahead at about 70-75%, but this result is much better than the 7.7% obtained by the next-best model in the same category.

Using the upgraded Claude 3.5 Sonnet in the Amazon Bedrock console

To get started with the upgraded Claude 3.5 Sonnet, I navigate to the Amazon Bedrock console and choose Model access in the navigation pane. There, I request access for the new Claude 3.5 Sonnet V2 model.

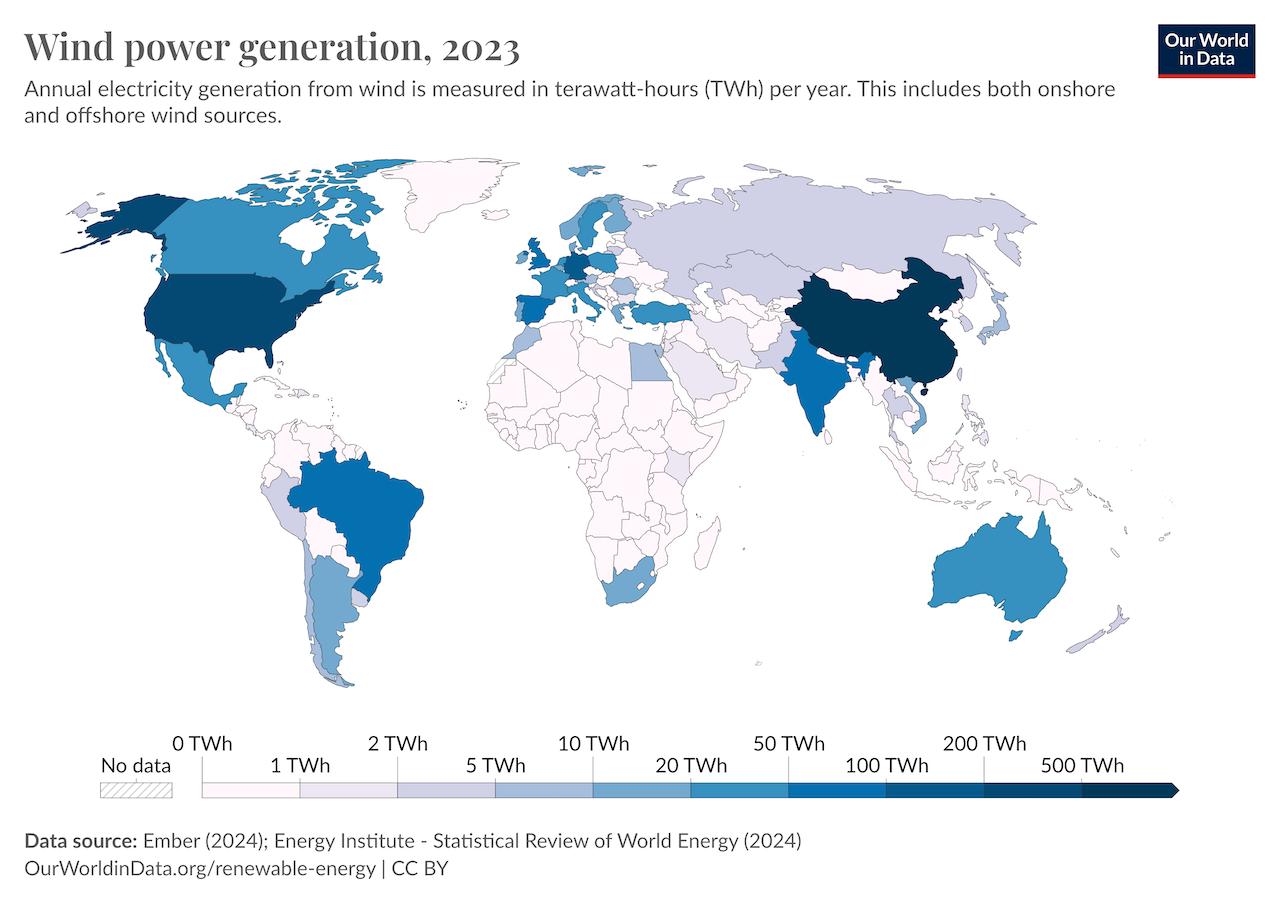

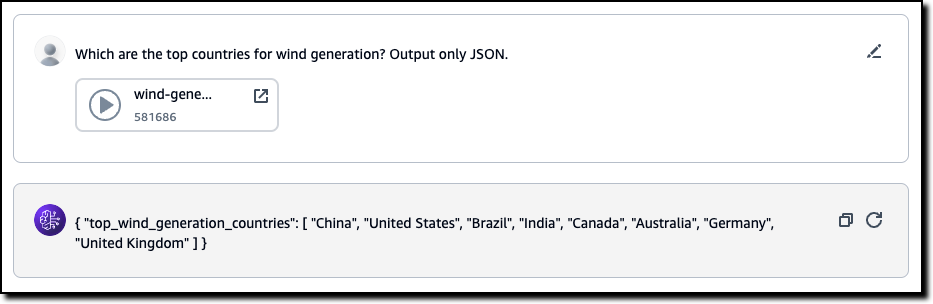

To test the new vision capability, I open another browser tab and download the Wind power generation chart in PNG format from the Our World in Data website.

Back in the Amazon Bedrock console, I choose Chat/text under Playgrounds in the navigation pane. For the model, I select Anthropic as the model provider and then Claude 3.5 Sonnet V2.

I use the three vertical dots in the input section of the chat to upload the image file from my computer. Then I enter this prompt:

Which are the top countries for wind power generation? Answer only in JSON.

The result follows my instructions and returns the list extracting the information from the image.

Using the upgraded Claude 3.5 Sonnet with AWS CLI and SDKs

Here’s a sample AWS Command Line Interface (AWS CLI) command using the Amazon Bedrock Converse API. I use the --query parameter of the CLI to filter the result and only show the text content of the output message:

In the output, I get this text from the response:

An anchor! You throw an anchor out when you want to use it to stop a boat, but you take it in (pull it up) when you don't want to use it and want to move the boat.

The AWS SDKs implement a similar interface. For example, you can use the AWS SDK for Python (Boto3) to analyze the same image as in the console example:

import boto3

MODEL_ID = "anthropic.claude-3-5-sonnet-20241022-v2:0"
IMAGE_NAME = "wind-generation.png"

bedrock_runtime = boto3.client("bedrock-runtime")

with open(IMAGE_NAME, "rb") as f:
    image = f.read()

user_message = "Which are the top countries for wind power generation? Answer only in JSON."

messages = [
    {
        "role": "user",
        "content": [
            {"image": {"format": "png", "source": {"bytes": image}}},
            {"text": user_message},
        ],
    }
]

response = bedrock_runtime.converse(
    modelId=MODEL_ID,
    messages=messages,
)

response_text = response["output"]["message"]["content"][0]["text"]
print(response_text)
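Because the prompt asks for a JSON-only answer, a natural next step is to parse the reply into Python data. The following is a minimal sketch, not part of the original example: `parse_json_reply` is a hypothetical helper, and it assumes the reply is either bare JSON or JSON wrapped in a Markdown code fence. The sample reply is a made-up placeholder, not real model output.

```python
import json

FENCE = "`" * 3  # Markdown code fence marker


def parse_json_reply(response_text: str):
    """Parse a model reply that is expected to contain only JSON.

    Assumption: the reply is bare JSON or JSON inside a Markdown code
    fence. Anything else raises json.JSONDecodeError.
    """
    text = response_text.strip()
    if text.startswith(FENCE):
        lines = text.splitlines()
        # Drop the opening fence line and, if present, the closing fence.
        if lines[-1].strip() == FENCE:
            lines = lines[1:-1]
        else:
            lines = lines[1:]
        text = "\n".join(lines)
    return json.loads(text)


# Placeholder reply, used only to illustrate the call.
sample_reply = '{"top_countries": ["Country A", "Country B"]}'
parsed = parse_json_reply(sample_reply)
print(parsed["top_countries"])
```

In the script above, you would pass `response_text` to this helper instead of the placeholder string, and handle `json.JSONDecodeError` for replies that do not follow the instruction.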

Integrating computer use with your application

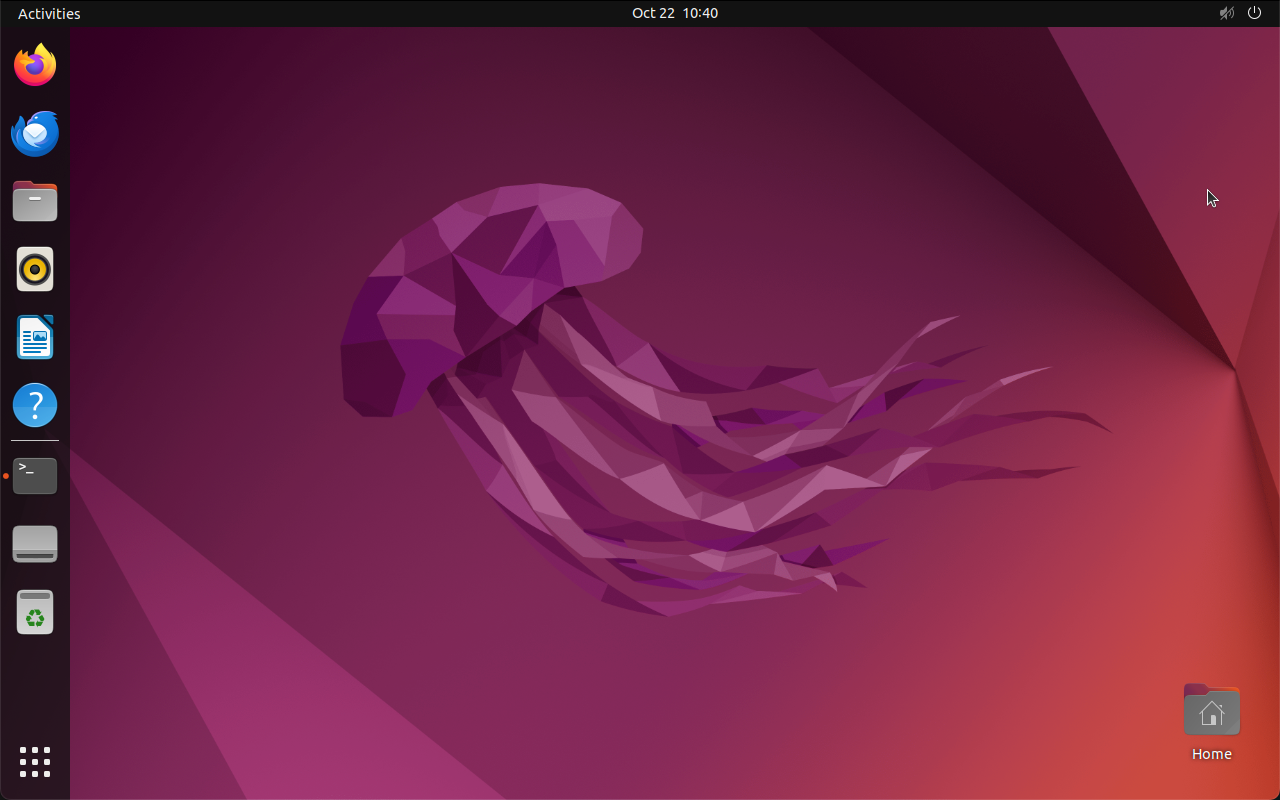

Let’s see how computer use works in practice. First, I take a snapshot of the desktop of a Ubuntu system:

This screenshot is the starting point for the steps that will be implemented by computer use. To see how that works, I run a Python script that passes the screenshot image and this prompt to the model as input:

Find me a hotel in Rome.

This script invokes the upgraded Claude 3.5 Sonnet in Amazon Bedrock using the new syntax required for computer use:

import base64
import json

import boto3

MODEL_ID = "anthropic.claude-3-5-sonnet-20241022-v2:0"
IMAGE_NAME = "ubuntu-screenshot.png"

bedrock_runtime = boto3.client(
    "bedrock-runtime",
    region_name="us-east-1",
)

# The native request format expects the image as a base64-encoded string.
with open(IMAGE_NAME, "rb") as f:
    image = f.read()

image_base64 = base64.b64encode(image).decode("utf-8")

prompt = "Find me a hotel in Rome."

body = {
    "anthropic_version": "bedrock-2023-05-31",
    "max_tokens": 512,
    "temperature": 0.5,
    "messages": [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {
                    "type": "image",
                    "source": {
                        "type": "base64",
                        "media_type": "image/png",  # must match the PNG screenshot file
                        "data": image_base64,
                    },
                },
            ],
        }
    ],
    "tools": [
        {  # new
            "type": "computer_20241022",  # literal / constant
            "name": "computer",  # literal / constant
            "display_height_px": 1280,  # min=1, no max
            "display_width_px": 800,  # min=1, no max
            "display_number": 0,  # min=0, max=N, default=None
        },
        {  # new
            "type": "bash_20241022",  # literal / constant
            "name": "bash",  # literal / constant
        },
        {  # new
            "type": "text_editor_20241022",  # literal / constant
            "name": "str_replace_editor",  # literal / constant
        },
    ],
    "anthropic_beta": ["computer-use-2024-10-22"],
}

# Convert the native request to JSON.
request = json.dumps(body)

try:
    # Invoke the model with the request.
    response = bedrock_runtime.invoke_model(modelId=MODEL_ID, body=request)
except Exception as e:
    print(f"ERROR: {e}")
    raise SystemExit(1)

# Decode the response body.
model_response = json.loads(response["body"].read())
print(model_response)

The body of the request includes new options:

- anthropic_beta with value ["computer-use-2024-10-22"] to enable computer use.

- The tools section supports a new type option (set to custom for the tools you configure).

- Note that the computer tool needs to know the resolution of the screen (display_height_px and display_width_px).
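Since the computer tool needs the screen resolution, one way to keep the request consistent with the screenshot is to read the dimensions from the PNG file itself. The sketch below is an illustration only: `png_dimensions` and `build_computer_use_tools` are hypothetical helper names, and it assumes the screenshot is an unscaled PNG capture of the full screen. The tool type and name strings are the constants from the request above.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_dimensions(data: bytes) -> tuple:
    """Return (width, height) read from the IHDR chunk of a PNG file."""
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG image")
    # Width and height are two big-endian 32-bit integers at bytes 16-23.
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def build_computer_use_tools(width_px: int, height_px: int, display_number=None) -> list:
    """Build the "tools" list for a computer use request (hypothetical helper)."""
    if width_px < 1 or height_px < 1:
        raise ValueError("display dimensions must be at least 1 pixel")
    computer_tool = {
        "type": "computer_20241022",
        "name": "computer",
        "display_width_px": width_px,
        "display_height_px": height_px,
    }
    if display_number is not None:
        computer_tool["display_number"] = display_number
    return [
        computer_tool,
        {"type": "bash_20241022", "name": "bash"},
        {"type": "text_editor_20241022", "name": "str_replace_editor"},
    ]


# Synthetic PNG header standing in for a real screenshot file.
fake_png = PNG_SIGNATURE + struct.pack(">I", 13) + b"IHDR" + struct.pack(">II", 1024, 768)
width, height = png_dimensions(fake_png)
print(build_computer_use_tools(width, height)[0])
```

In the script above, the `image` bytes read from the screenshot file would take the place of the synthetic header, and the returned list would be assigned to the tools key of the request body.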

To follow my instructions with computer use, the model provides actions that operate on the desktop described by the input screenshot.

The response from the model includes a tool_use section from the computer tool that provides the first step. The model has found in the screenshot the Firefox browser icon and the position of the mouse arrow. Because of that, it now asks to move the mouse to specific coordinates to start the browser.

{
    "id": "msg_bdrk_01WjPCKnd2LCvVeiV6wJ4mm3",
    "type": "message",
    "role": "assistant",
    "model": "claude-3-5-sonnet-20241022",
    "content": [
        {
            "type": "text",
            "text": "I'll help you search for a hotel in Rome. I see Firefox browser on the desktop, so I'll use that to access a travel website.",
        },
        {
            "type": "tool_use",
            "id": "toolu_bdrk_01CgfQ2bmQsPFMaqxXtYuyiJ",
            "name": "computer",
            "input": {"action": "mouse_move", "coordinate": [35, 65]},
        },
    ],
    "stop_reason": "tool_use",
    "stop_sequence": None,
    "usage": {"input_tokens": 3443, "output_tokens": 106},
}

This is just the first step. As with usual tool use requests, the script should reply with the result of using the tool (moving the mouse in this case). Based on the initial request to find a hotel, there would be a loop of tool use interactions that will ask to click on the icon, type a URL in the browser, and so on until a hotel has been found.
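One turn of that loop can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation from the repository mentioned below: the helper names are hypothetical, `dry_run_executor` stands in for a real action-execution layer and only describes the action instead of performing it, and the reply uses the `tool_result` content block of the Anthropic Messages format.

```python
def extract_tool_uses(model_response: dict) -> list:
    """Return the tool_use content blocks from a model response."""
    return [
        block
        for block in model_response.get("content", [])
        if block.get("type") == "tool_use"
    ]


def dry_run_executor(tool_name: str, tool_input: dict) -> str:
    """Hypothetical stand-in for the action-execution layer (performs nothing)."""
    return f"(dry run) {tool_name} received {tool_input}"


def next_messages(messages: list, model_response: dict, executor=dry_run_executor) -> list:
    """Append the assistant turn and, if tools were requested, their results."""
    updated = messages + [
        {"role": "assistant", "content": model_response["content"]}
    ]
    results = [
        {
            "type": "tool_result",
            "tool_use_id": block["id"],
            "content": [
                {"type": "text", "text": executor(block["name"], block["input"])}
            ],
        }
        for block in extract_tool_uses(model_response)
    ]
    if results:
        # All results for one assistant turn go back in a single user message.
        updated.append({"role": "user", "content": results})
    return updated


# Abbreviated copy of the response shown above.
sample_response = {
    "content": [
        {"type": "text", "text": "I'll help you search for a hotel in Rome."},
        {
            "type": "tool_use",
            "id": "toolu_bdrk_01CgfQ2bmQsPFMaqxXtYuyiJ",
            "name": "computer",
            "input": {"action": "mouse_move", "coordinate": [35, 65]},
        },
    ],
    "stop_reason": "tool_use",
}
history = [{"role": "user", "content": [{"type": "text", "text": "Find me a hotel in Rome."}]}]
history = next_messages(history, sample_response)
print(history[-1]["content"][0]["tool_use_id"])
```

In a full script, the updated list would replace the messages value in the request body, and the model would be invoked again for as long as the stop_reason in the response is tool_use. A real executor would typically also return a fresh screenshot after each action so the model can check the result.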

A more complete example is available in this repository shared by Anthropic.

Things to know

The upgraded Claude 3.5 Sonnet is available today in Amazon Bedrock in the US West (Oregon) AWS Region and is offered at the same cost as the original Claude 3.5 Sonnet. For up-to-date information on regional availability, refer to the Amazon Bedrock documentation. For detailed cost information for each Claude model, visit the Amazon Bedrock pricing page.

In addition to the greater intelligence of the upgraded model, software developers can now integrate computer use (available in public beta) in their applications to automate complex desktop workflows, enhance software testing processes, and create more sophisticated AI-powered applications.

Claude 3.5 Haiku will be released in the coming weeks, initially as a text-only model and later with image input.

You can see how computer use can help with coding in this video with Alex Albert, Head of Developer Relations at Anthropic.

This other video describes computer use for automating operations.

To learn more about these new features, visit the Claude models section of the Amazon Bedrock documentation. Give the upgraded Claude 3.5 Sonnet a try in the Amazon Bedrock console today, and send feedback to AWS re:Post for Amazon Bedrock. You can find deep-dive technical content and discover how our Builder communities are using Amazon Bedrock at community.aws. Let us know what you build with these new capabilities!

– Danilo