Conversational AI is revolutionizing information access, offering a personalized, intuitive search experience that delights users and empowers businesses. A well-designed conversational agent acts as a knowledgeable guide, understanding user intent and effortlessly navigating vast data, which leads to happier, more engaged users, fostering loyalty and trust. Meanwhile, businesses benefit from increased efficiency, reduced costs, and a stronger bottom line. On the other hand, a poorly designed system can lead to frustration, confusion, and, ultimately, abandonment.

Achieving success with conversational AI requires more than just deploying a chatbot. To truly harness this technology, we must master the intricate dynamics of human-AI interaction. This involves understanding how users articulate needs, explore results, and refine queries, paving the way for a seamless and effective search experience.

This article will decode the three phases of conversational search, the challenges users face at each stage, and the strategies and best practices AI agents can employ to enhance the experience.

The Three Phases Of Conversational Search

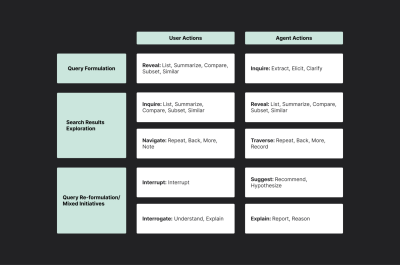

To analyze these complex interactions, Trippas et al. (2018) (PDF) proposed a framework that outlines three core phases in the conversational search process:

- Query formulation: Users express their information needs, often facing challenges in articulating them clearly.

- Search results exploration: Users navigate through presented results, seeking further information and refining their understanding.

- Query re-formulation: Users refine their search based on new insights, adapting their queries and exploring different avenues.

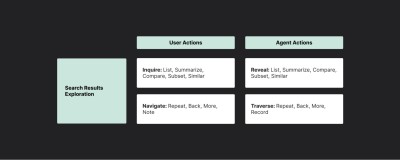

Building on this framework, Azzopardi et al. (2018) (PDF) identified five key user actions within these phases (reveal, inquire, navigate, interrupt, and interrogate) and five corresponding agent actions (inquire, reveal, traverse, suggest, and explain).

In the following sections, I’ll break down each phase of the conversational search journey, delving into the actions users take and the corresponding strategies AI agents can employ, as identified by Azzopardi et al. (2018) (PDF). I’ll also share actionable tactics and real-world examples to guide the implementation of these strategies.

Phase 1: Query Formulation: The Art Of Articulation

In the initial phase of query formulation, users attempt to translate their needs into prompts. This process involves conscious disclosures — sharing details they believe are relevant — and unconscious non-disclosure — omitting information they may not deem important or struggle to articulate.

This process is fraught with challenges. As Jakob Nielsen aptly pointed out,

“Articulating ideas in written prose is hard. Most likely, half the population can’t do it. This is a usability problem for current prompt-based AI user interfaces.”

— Jakob Nielsen

This can manifest as:

- Vague language: “I need help with my finances.”

Budgeting? Investing? Debt management?

- Missing details: “I need a new pair of shoes.”

What type of shoes? For what purpose?

- Limited vocabulary: Not knowing the right technical terms. “I think I have a sprain in my ankle.”

The user might not know the difference between a sprain and a strain or the correct anatomical terms.

These challenges can lead to frustration for users and less relevant results from the AI agent.

AI Agent Strategies: Nudging Users Towards Better Input

To bridge the articulation gap, AI agents can employ three core strategies:

- Elicit: Proactively guide users to provide more information.

- Clarify: Seek to resolve ambiguities in the user’s query.

- Suggest: Offer alternative phrasing or search terms that better capture the user’s intent.

The key to effective query formulation is balancing elicitation and assumption. Overly aggressive questioning can frustrate users, and making too many assumptions can lead to inaccurate results.

For example,

User: “I need a new phone.”

AI: “What’s your budget? What features are important to you? What size screen do you prefer? What carrier do you use?…”

This rapid-fire questioning can overwhelm the user and make them feel like they’re being interrogated. A more effective approach is to start with a few open-ended questions and gradually elicit more details based on the user’s responses.

As Azzopardi et al. (2018) (PDF) stated in the paper,

“There may be a trade-off between the efficiency of the conversation and the accuracy of the information needed as the agent has to decide between how important it is to clarify and how risky it is to infer or impute the underspecified or missing details.”
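One way to make this trade-off concrete is to score each missing detail and only ask about the ones that matter most, while assuming a default for the rest. The sketch below is a minimal illustration, not a method from the paper: the `Slot` structure, the importance scores, the `ASK_THRESHOLD` cut-off, and the two-question cap are all hypothetical values chosen for the phone example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Slot:
    name: str
    question: str
    importance: float              # assumed 0..1 score: how much the answer changes results
    default: Optional[str] = None  # value the agent may assume instead of asking


ASK_THRESHOLD = 0.6         # hypothetical cut-off between "ask" and "assume"
MAX_QUESTIONS_PER_TURN = 2  # hypothetical cap so the user is not interrogated


def plan_turn(slots, known):
    """Split missing details into questions to ask now and values to assume."""
    missing = sorted(
        (s for s in slots if s.name not in known),
        key=lambda s: s.importance,
        reverse=True,
    )
    # Ask when the detail is important, or when there is nothing safe to assume.
    must_ask = [s for s in missing if s.importance >= ASK_THRESHOLD or s.default is None]
    to_ask = must_ask[:MAX_QUESTIONS_PER_TURN]
    asked = {s.name for s in to_ask}
    assumed = {
        s.name: s.default
        for s in missing
        if s.name not in asked and s.default is not None
    }
    return [s.question for s in to_ask], assumed


# Illustrative slots for "I need a new phone." (scores are made up).
PHONE_SLOTS = [
    Slot("budget", "What's your budget?", 0.9),
    Slot("features", "Which features matter most to you?", 0.7),
    Slot("screen", "What size screen do you prefer?", 0.3, default="any size"),
    Slot("carrier", "What carrier do you use?", 0.2, default="unlocked"),
]

questions, assumed = plan_turn(PHONE_SLOTS, known={})
```

With these made-up scores, the agent would ask about budget and features in the first turn and quietly assume the screen size and carrier, which the user can still correct later.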

Implementation Tactics And Examples

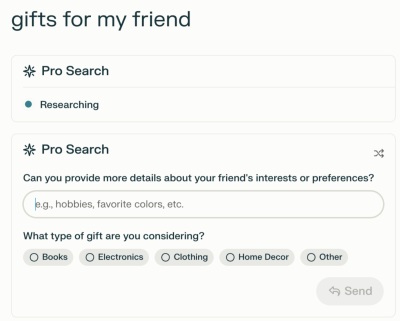

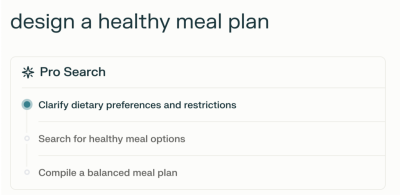

- Probing questions: Ask open-ended or clarifying questions to gather more details about the user’s needs. For example, Perplexity Pro uses probing questions to elicit more details about the user’s needs for gift recommendations.

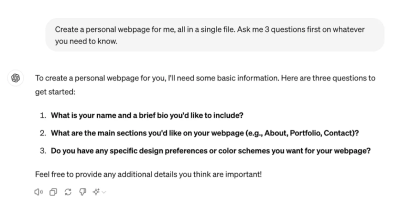

For example, after clicking one of the initial prompts, “Create a personal webpage,” ChatGPT added another sentence, “Ask me 3 questions first on whatever you need to know,” to elicit more details from the user.

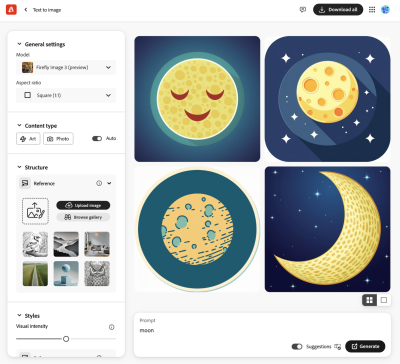

- Interactive refinement: Utilize visual aids like sliders, checkboxes, or image carousels to help users specify their preferences without articulating everything in words. For example, Adobe Firefly’s side settings allow users to adjust their preferences.

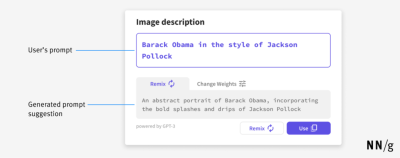

- Suggested prompts: Provide examples of more specific or detailed queries to help users refine their search terms. For example, Nielsen Norman Group provides an interface that offers a suggested prompt to help users refine their initial query.

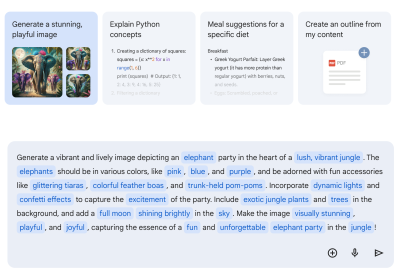

For example, after clicking one of the initial prompts in Gemini, “Generate a stunning, playful image,” more details are added in blue in the input.

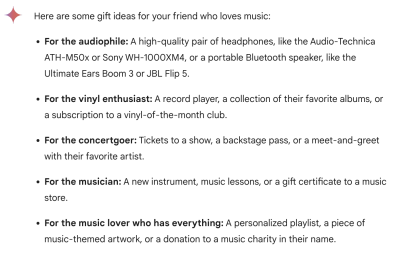

- Offering multiple interpretations: If the query is ambiguous, present several possible interpretations and let the user choose the most accurate one. For example, Gemini offers a list of gift suggestions for the query “gifts for my friend who loves music,” categorized by the recipient’s potential music interests to help the user pick the most relevant one.

Phase 2: Search Results Exploration

Once the query is formed, the focus shifts to exploration. Users embark on a multifaceted journey through search results, seeking to understand their options and make informed decisions.

Two primary user actions mark this phase:

- Inquire: Users actively seek more information, asking for details, comparisons, summaries, or related options.

- Navigate: Users move through the presented information by browsing lists, revisiting previous options, or requesting additional results. This involves scrolling, clicking, and using voice commands like “next” or “previous.”

AI Agent Strategies: Facilitating Exploration And Discovery

To guide users through the vast landscape of information, AI agents can employ these strategies:

- Reveal: Present information that caters to diverse user needs and preferences.

- Traverse: Guide the user through the information landscape, providing intuitive navigation and responding to their evolving interests.

During discovery, it’s vital to avoid information overload, which can overwhelm users and hinder their decision-making. For example,

User: “I’m looking for a place to stay in Tokyo.”

AI: Provides a lengthy list of hotels without any organization or filtering options.

Instead, AI agents should offer the most relevant results and allow users to filter or sort them based on their needs. This might include presenting a few top recommendations based on ratings or popularity, with options to refine the search by price range, location, amenities, and so on.
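As a rough sketch of this idea, the snippet below returns only a handful of top results along with the total number of matches, so the agent can mention that more options exist without listing them all. The `Hotel` fields, the sample data, and the `top_results` helper are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass


@dataclass
class Hotel:
    name: str
    price: int     # nightly price (currency is an assumption of this sketch)
    rating: float  # average guest rating
    area: str


def top_results(hotels, max_price=None, area=None, sort_by="rating", limit=3):
    """Filter, sort, and truncate results; also report how many matched."""
    matches = [
        h for h in hotels
        if (max_price is None or h.price <= max_price)
        and (area is None or h.area == area)
    ]
    # Higher ratings come first; lower prices come first.
    matches.sort(key=lambda h: getattr(h, sort_by), reverse=(sort_by == "rating"))
    return matches[:limit], len(matches)


# Made-up sample data for the Tokyo example.
HOTELS = [
    Hotel("Hotel A", 120, 4.5, "Shinjuku"),
    Hotel("Hotel B", 90, 4.1, "Asakusa"),
    Hotel("Hotel C", 200, 4.8, "Shibuya"),
    Hotel("Hotel D", 150, 4.3, "Shinjuku"),
]

best, total = top_results(HOTELS)
budget, budget_total = top_results(HOTELS, max_price=150, sort_by="price")
```

Returning the total alongside the shortlist lets the agent say something like "here are the top three of four matches" and offer filters as a next step.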

Additionally, AI agents should understand natural language navigation. For example, if a user asks, “Tell me more about the second hotel,” the AI should provide additional details about that specific option without requiring the user to rephrase their query. This level of understanding is crucial for flexible navigation and a seamless user experience.
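A very simple version of this behavior can be sketched by mapping ordinal words or numbers in the user's message onto the list of results most recently shown. This is a deliberately naive, keyword-based illustration. A production agent would more likely rely on the language model and the conversation state, and the `resolve_reference` helper is a hypothetical name.

```python
import re

# Positions the user can refer to by word; "last" maps to the final item.
ORDINALS = {"first": 0, "second": 1, "third": 2, "fourth": 3, "fifth": 4, "last": -1}


def resolve_reference(utterance, shown):
    """Return the previously shown item the user points at, or None."""
    for word in re.findall(r"[a-z]+|\d+", utterance.lower()):
        if word in ORDINALS:
            index = ORDINALS[word]
            if shown and (index == -1 or index < len(shown)):
                return shown[index]
        elif word.isdigit():
            number = int(word)  # "option 2" or "2nd" means the second item
            if 1 <= number <= len(shown):
                return shown[number - 1]
    return None


# Results the agent displayed in its previous turn (made-up names).
shown_hotels = ["Park Hotel", "Hotel Sakura", "Bay Inn"]
selected = resolve_reference("Tell me more about the second hotel", shown_hotels)
```

The key design point is that the agent keeps the previously displayed list in context, so a short reference such as "the second hotel" is enough to continue the conversation.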

Implementation Tactics And Examples

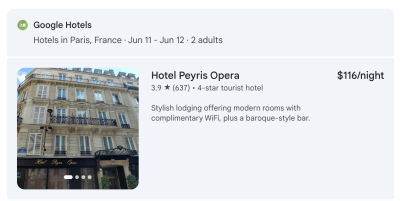

- Diverse formats: Offer results in various formats (lists, summaries, comparisons, images, videos) and allow users to specify their preferences. For example, in response to the prompt “I’m looking for a place to stay in Paris,” Gemini presents a summarized view of each hotel, including a photo, price, rating, star rating, category, and brief description, so the user can evaluate options quickly.

- Context-aware navigation: Maintain conversational context, remember user preferences, and provide relevant navigation options. For example, following the previous example prompt, Gemini reminds users of the potential next steps at the end of the response.

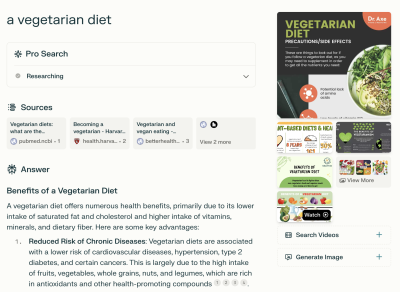

- Interactive exploration: Use carousels, clickable images, filter options, and other interactive elements to enhance the exploration experience. For example, Perplexity offers a carousel of images related to “a vegetarian diet” and other interactive elements like “Watch Videos” and “Generate Image” buttons to enhance exploration and discovery.

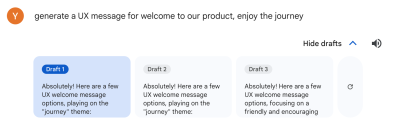

- Multiple responses: Present several variations of a response. For example, users can see multiple draft responses to the same query by clicking the “Show drafts” button in Gemini.

- Flexible text length and tone: Enable users to customize the length and tone of AI-generated responses to better suit their preferences. For example, Gemini provides multiple options for welcome messages, offering varying lengths, tones, and degrees of formality.

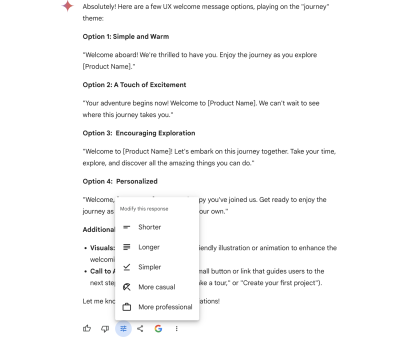

Phase 3: Query Re-formulation: Adapting To Evolving Needs

As users interact with results, their understanding deepens, and their initial query might not fully capture their evolving needs. During query re-formulation, users refine their search based on exploration and new insights, often involving interrupting and interrogating. Query re-formulation empowers users to course-correct and refine their search.

- Interrupt: Users might pause the conversation to:

- Correct: “Actually, I meant a desktop computer, not a laptop.”

- Add information: “I also need it to be good for video editing.”

- Change direction: “I’m not interested in those options. Show me something else.”

- Interrogate: Users challenge the AI to ensure it understands their needs and justify its recommendations:

- Seek understanding: “What do you mean by ‘good battery life’?”

- Request explanations: “Why are you recommending this particular model?”

AI Agent Strategies: Adapting And Explaining

To navigate the query re-formulation phase effectively, AI agents need to be responsive, transparent, and proactive. Two core strategies apply:

- Suggest: Proactively offer alternative directions or options to guide the user towards a more satisfying outcome.

- Explain: Provide clear and concise explanations for recommendations and actions to foster transparency and build trust.

AI agents should keep suggestions relevant and explain why each option is suggested, without overwhelming users with unrelated suggestions that increase conversational effort. A bad example would be the following:

User: “I want to visit Italian restaurants in New York.”

AI: Suggests unrelated options, like Mexican restaurants or American restaurants, when the user is interested in Italian cuisine.

This could frustrate the user and reduce trust in the AI.

A better answer could be, “I found these highly-rated Italian restaurants. Would you like to see more options based on different price ranges?” This ensures users understand the reasons behind recommendations, enhancing their satisfaction and trust in the AI’s guidance.

Implementation Tactics And Examples

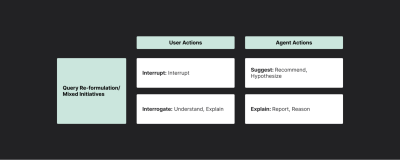

- Transparent system process: Show the steps involved in generating a response. For example, Perplexity Pro outlines the search process step by step to fulfill the user’s request.

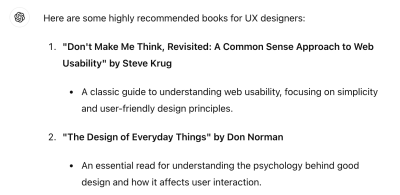

- Explainable recommendations: Clearly state the reasons behind specific recommendations, referencing user preferences, historical data, or external knowledge. For example, ChatGPT includes recommended reasons for each listed book in response to the question “books for UX designers.”

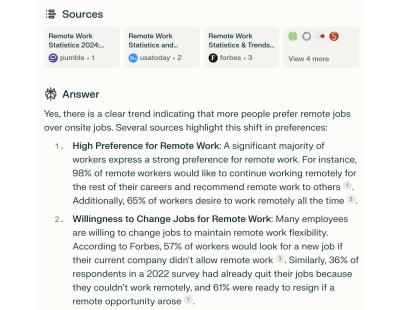

- Source reference: Enhance the answer with source references to strengthen the evidence supporting the conclusion. For example, Perplexity presents source references to support the answer.

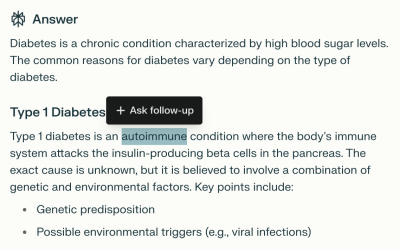

- Point-to-select: Users should be able to directly select specific elements or locations within the dialogue for further interaction rather than having to describe them verbally. For example, users can select part of an answer and ask a follow-up in Perplexity.

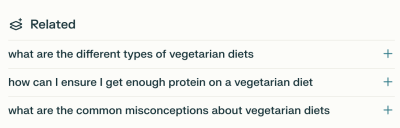

- Proactive recommendations: Suggest related or complementary items based on the user’s current selections. For example, Perplexity offers a list of related questions to guide the user’s exploration of “a vegetarian diet.”

Overcoming LLM Shortcomings

While the strategies discussed above can significantly improve the conversational search experience, LLMs still have inherent limitations that can hinder their intuitiveness. These include the following:

- Hallucinations: Generating false or nonsensical information.

- Lack of common sense: Difficulty understanding queries that require world knowledge or reasoning.

- Sensitivity to input phrasing: Producing different responses to slightly rephrased queries.

- Verbosity: Providing overly lengthy or irrelevant information.

- Bias: Reflecting biases present in the training data.

To create truly effective and user-centric conversational AI, it’s crucial to address these limitations and make interactions more intuitive. Here are some key strategies:

- Incorporate structured knowledge

Integrating external knowledge bases or databases can ground the LLM’s responses in facts, reducing hallucinations and improving accuracy.

- Fine-tuning

Training the LLM on domain-specific data enhances its understanding of particular topics and helps mitigate bias.

- Intuitive feedback mechanisms

Allow users to easily highlight and correct inaccuracies or provide feedback directly within the conversation. This could involve clickable elements to flag problematic responses or a “this is incorrect” button that prompts the AI to reconsider its output.

- Natural language error correction

Develop AI agents capable of understanding and responding to natural language corrections. For example, if a user says, “No, I meant X,” the AI should be able to interpret this as a correction and adjust its response accordingly.

- Adaptive learning

Implement machine learning algorithms that allow the AI to learn from user interactions and improve its performance over time. This could involve recognizing patterns in user corrections, identifying common misunderstandings, and adjusting behavior to minimize future errors.
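To illustrate the natural language error correction strategy above, here is a minimal, pattern-based sketch that recognizes corrections of the form “I meant X” or “I meant X, not Y” and revises the previous query. The regular expression and the `parse_correction` and `revise_query` helpers are hypothetical. A real agent would likely let the language model interpret corrections rather than match a fixed phrase.

```python
import re

# Matches "I meant X" with an optional ", not Y" tail (case-insensitive).
CORRECTION = re.compile(
    r"i meant\s+(?P<new>.+?)(?:,\s*not\s+(?P<old>.+?))?[.!]?$",
    re.IGNORECASE,
)


def parse_correction(utterance):
    """Return {'new': ..., 'old': ...} if the message is a correction, else None."""
    match = CORRECTION.search(utterance.strip())
    if match is None:
        return None
    return {"new": match.group("new"), "old": match.group("old")}


def revise_query(previous_query, correction):
    """Swap the corrected phrase into the previous query when it can be found."""
    old, new = correction["old"], correction["new"]
    if old and old in previous_query:
        return previous_query.replace(old, new)
    return new  # fall back to treating the correction as the new query


correction = parse_correction("Actually, I meant a desktop computer, not a laptop.")
revised = revise_query("I'm looking for a laptop", correction)
```

Tracking what was replaced also gives adaptive learning something to work with, since repeated corrections of the same kind can point to a common misunderstanding.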

Training AI Agents For Enhanced User Satisfaction

Understanding and evaluating user satisfaction is fundamental to building effective conversational AI agents. However, directly measuring user satisfaction in the open-domain search context can be challenging, as Zhumin Chu et al. (2022) highlighted. Traditionally, metrics like session abandonment rates or task completion were used as proxies, but these don’t fully capture the nuances of user experience.

To address this, Clemencia Siro et al. (2023) offer a comprehensive approach to gathering and leveraging user feedback:

- Identify key dialogue aspects

To truly understand user satisfaction, we need to look beyond simple metrics like “thumbs up” or “thumbs down.” Consider evaluating aspects like relevance, interestingness, understanding, task completion, interest arousal, and efficiency. This multi-faceted approach provides a more nuanced picture of the user’s experience.

- Collect multi-level feedback

Gather feedback at both the turn level (each question-answer pair) and the dialogue level (the overall conversation). This granular approach pinpoints specific areas for improvement, both in individual responses and the overall flow of the conversation.

- Recognize individual differences

Understand that the concept of satisfaction varies per user. Avoid assuming all users perceive satisfaction similarly.

- Prioritize relevance

While all aspects are important, relevance (at the turn level) and understanding (at both the turn and session level) have been identified as key drivers of user satisfaction. Focus on improving the AI agent’s ability to provide relevant and accurate responses that demonstrate a clear understanding of the user’s intent.
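The multi-level feedback idea can be sketched as a small data structure that stores ratings per turn and for the dialogue as a whole. The aspect names come from the list above, while the 1 to 5 rating scale, the class names, and the helper methods are assumptions made for illustration and are not taken from the cited study.

```python
from dataclasses import dataclass, field
from statistics import mean

ASPECTS = (
    "relevance", "interestingness", "understanding",
    "task completion", "interest arousal", "efficiency",
)


@dataclass
class TurnFeedback:
    turn_index: int
    ratings: dict  # aspect -> rating on an assumed 1..5 scale


@dataclass
class DialogueFeedback:
    turns: list = field(default_factory=list)    # turn-level feedback
    overall: dict = field(default_factory=dict)  # dialogue-level ratings

    def aspect_mean(self, aspect):
        """Average turn-level rating for one aspect, or None if unrated."""
        scores = [t.ratings[aspect] for t in self.turns if aspect in t.ratings]
        return mean(scores) if scores else None

    def weakest_turn(self, aspect):
        """Index of the turn rated lowest on the given aspect, or None."""
        rated = [t for t in self.turns if aspect in t.ratings]
        if not rated:
            return None
        return min(rated, key=lambda t: t.ratings[aspect]).turn_index


# Made-up ratings for a three-turn conversation.
feedback = DialogueFeedback(
    turns=[
        TurnFeedback(0, {"relevance": 4, "understanding": 5}),
        TurnFeedback(1, {"relevance": 2, "understanding": 3}),
        TurnFeedback(2, {"relevance": 3}),
    ],
    overall={"task completion": 4, "efficiency": 3},
)
```

Keeping both levels in one record makes it possible to trace a low dialogue-level score back to the specific turn that caused it, for example by calling `feedback.weakest_turn("relevance")`.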

Additionally, consider these practical tips for incorporating user satisfaction feedback into the AI agent’s training process:

- Iterate on prompts

Use user feedback to refine the prompts that elicit information and guide the conversation.

- Refine response generation

Leverage feedback to improve the relevance and quality of the AI agent’s responses.

- Personalize the experience

Tailor the conversation to individual users based on their preferences and feedback.

- Continuously monitor and improve

Regularly collect and analyze user feedback to identify areas for improvement and iterate on the AI agent’s design and functionality.

The Future Of Conversational Search: Beyond The Horizon

The evolution of conversational search is far from over. As AI technologies continue to advance, we can anticipate exciting developments:

- Multi-modal interactions

Conversational search will move beyond text, incorporating voice, images, and video to create more immersive and intuitive experiences.

- Personalized recommendations

AI agents will become more adept at tailoring search results to individual users, considering their past interactions, preferences, and context. This could involve suggesting restaurants based on dietary restrictions or recommending movies based on previously watched titles.

- Proactive assistance

Conversational search systems will anticipate user needs and proactively offer information or suggestions. For instance, an AI travel agent might suggest packing tips or local customs based on a user’s upcoming trip.
